Path Planning with Reinforcement Learning (RL)

Simulation Project with Python

Quick Summary

This project aims to test various reinforcement learning (RL) algorithms for the global path planning of a mobile robot. The environment is designed based on the popular WALL-E animation, and the evaluated algorithms include Q-learning, SARSA, TD(0) learning, and Double Q-learning. Temporal Difference (TD) learning combines principles from Monte Carlo methods and dynamic programming (DP).

Technical Stack

🔧 Key Software & Frameworks:

- Tkinter (custom grid-world GUI)

- NumPy

- Pandas

- Matplotlib

🧠 Methods & Algorithms:

- Reinforcement Learning (RL)

- Q-Learning

- SARSA

- TD(0) learning

- Double Q-Learning Markov Decision Process (MDP) modeling

💻 Languages:

- Python

Environment 1

The environment is created based on the popular WALL-E animation. In this environment, WALL-E aims to reach the goal, represented by the EVE robot, while avoiding numerous obstacles along the path.

The environment size is 15 × 15, where each grid cell is 40 × 40 pixels, and there are 52 obstacles within the map. Aside from the two robots (WALL-E and EVE), the obstacles include trees, buildings, garbage, road signs, a plant in a boot (inspired by the animation), and a Rubik’s Cube. The upper-left corner of the environment, which represents the agent’s starting position, is at (0, 0). Movement to the right and downward corresponds to the +X and +Y directions, respectively. For example, moving two steps to the right and one step downward places the agent at position [80, 40].

The environment is implemented using Tkinter, a standard Python interface to the Tcl/Tk GUI toolkit. Additionally, the Pandas library is used for handling tabular data in the algorithms, NumPy is employed for scientific computing, and Matplotlib is used for visualization. The figure below shows a screenshot of the environment. The placement of obstacles and the blocked area around the goal are designed to make finding an optimal path challenging for the agent.

This reinforcement learning environment is modeled as a Markov Decision Process (MDP), where the state, action, and reward sets are denoted by S, A, and R, respectively. The environment dynamics are defined by the probability distribution p(s′,r/s,a) over states, actions, and rewards. However, the testing environment is deterministic, and no stochastic actions are involved.

During training, the agent (WALL-E) can move up, down, left, or right to reach the final goal. Each time the agent collides with an obstacle, it receives a reward of −5 and is reset to the initial position. Reaching the goal yields a significant reward of +100, while all other movements receive a reward of zero. Inspired by the animation, WALL-E’s interest in the Rubik’s Cube is modeled as an additional motivation: although it is not the goal, interacting with it results in a reward of −1. This problem setup provides a suitable benchmark for evaluating popular RL algorithms, given the number of obstacles, the environment size, the complexity around the goal, and the presence of intermediate motivations along the path.

Environment 2

Cliff Walking problem

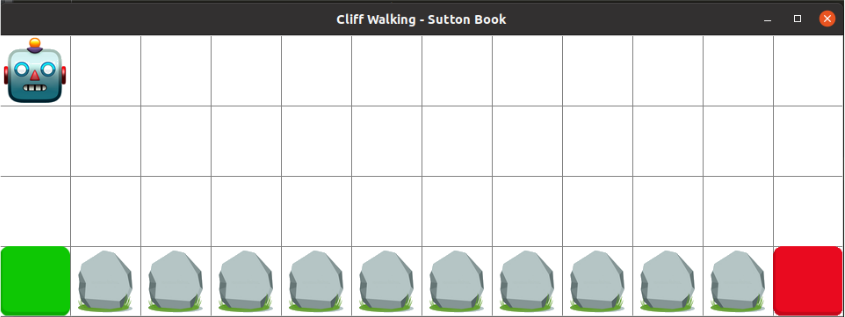

This problem is derived from “Reinforcement Learning : An Introduction” book by Andrew Barto and Richard S. Sutton. The environment is similar to figure below. This is a standard undiscounted, episodic task, with start and goal states and the usual actions causing movement up, down, Optimal path right, and left. The reward is -1 on all transitions except those into the region marked The Cliff. Stepping into this region incurs a reward of -100 and sends the agent instantly back to the start.

The starting point is a green square, and the goal is reaching to the red one. Stones show the cliffs, and the agent is a robot that wants to plan an optimal path. In order to have a comprehensive analyse, all the mentioned algorithms (Q-learning, SARSA and Double Q- learning) are tested in this environment. The environment and algorithms variables are as follow : action space = 4 = [’up’, ’down’, ’right’, ’left’], α = 0.9, γ = 0.9, ε = 0.1 1 , decay factor = 0.999, number of episodes = 500.

Materials

– A complete description of the simulation setup and results is provided in this document: PDF report.

– The source code is available on GitHub at the following link: GitHub repository.