TurtleBot3 Navigation based on RL with Sending Goals to NavStack

Simulation project with ROS

Summary

To use this repository, place the files in the required locations and run the system as instructed. The objective of this project is to navigate a TurtleBot3 (Waffle model) using Reinforcement Learning (RL), without relying on onboard sensors. The learned policy produces a path, and the areas along that path are sent to the navigation stack as a sequence of goals.

Note: This program is a simplified implementation combining RL, navigation, and goal sending in ROS. It is designed to test fundamental RL concepts alongside robot navigation. The framework can easily be extended to other RL methods or environments. You are free to use, modify, and share this code without any restrictions.

Key Tools & Methods — Technical Stack

🔧 Key Software & Frameworks:

- ROS (Robot Operating System)

- ROS Navigation Stack (NavStack)

- ROS action interfaces (goal-based navigation)

- Gazebo simulator

🧠 Methods & Algorithms:

- Reinforcement Learning (RL)

- Q-Learning (tabular)

💻 Languages:

- Python

Q-Learning–Based Navigation

In this section, I explain the Q-learning–based navigation approach and provide a brief overview of the method. For a deeper understanding of reinforcement learning or Q-learning, a wide range of resources is available online.

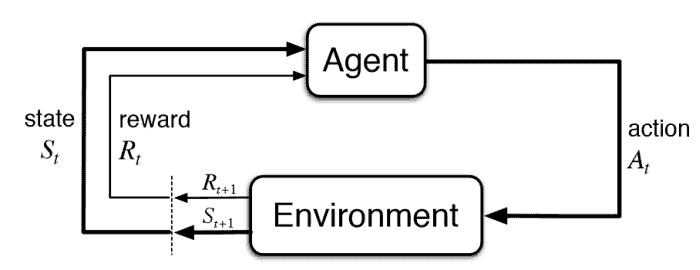

Reinforcement Learning is a branch of machine learning inspired by behaviorist psychology, focusing on how an agent can take actions in an environment to maximize cumulative rewards. In this framework, the agent has no prior knowledge of the environment and learns solely from experience. The agent operates within a Markov Decision Process (MDP), and its performance is evaluated using two functions: the value function (state value) and the Q-function (action value). The value function estimates the desirability of states under a given policy, while the Q-function represents the expected return of state–action pairs.

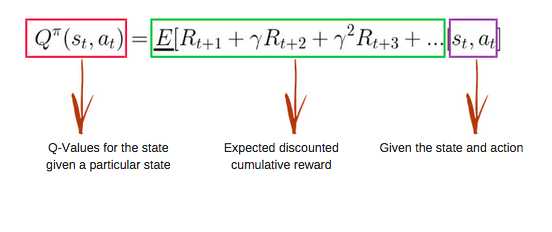

Action-value methods aim to learn Q-values, which capture the expected return for taking a specific action in a given state and following a policy thereafter. One such method is Q-learning, where the agent maintains a Q-table, denoted Q[S, A], with S representing states and A representing actions. After each action, the agent observes the reward and updates the corresponding Q-table entry using the Bellman update rule.
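For reference, the standard tabular Q-learning update can be written as below. The learning rate α and the discount factor γ are not given in this write-up, so they appear here only as generic symbols; s' is the state reached after taking action a in state s, and r is the reward received for that transition.

$$
Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]
$$

With α = 1 this reduces to Q(s, a) ← r + γ max Q(s', a'), a form often used when transitions are deterministic, as in a grid world.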

Reward Design and Environment

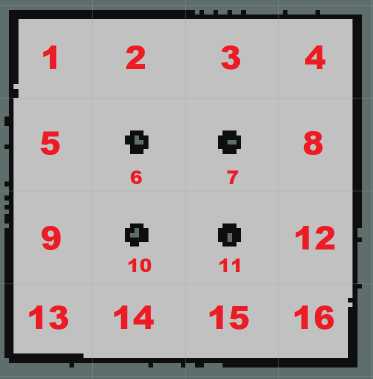

Since the environment used in this project is a discrete grid-based world, rewards are defined accordingly. The working environment consists of 16 areas and 4 obstacles, though other environments with different configurations can also be used.

Each area is assigned a unique number, and the robot determines an optimal path from a starting area to a target area without using any sensors. Moving to a neighboring free area yields a reward of +1. Moving to a neighboring area that contains an obstacle yields a reward of −10. All other transitions, that is, transitions to areas that are not neighbors, receive a reward of 0. Reaching the goal area yields a reward of +900. These reward values and structures can be modified depending on the environment design.

For example, if the robot starts in area 1, it receives a +1 reward for moving to areas 2 or 5, a −10 reward for moving to area 6 (which contains an obstacle), and 0 for other transitions. This reward structure guides the robot toward safe and optimal paths.
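The reward scheme above can be sketched as a 16 × 16 matrix indexed by (current area, next area). This is a minimal illustration, not the code from the repository. It assumes a 4 × 4 layout numbered row by row and treats diagonal cells as neighbors, which is one reading consistent with the area 1 example. Only the obstacle in area 6 comes from the text; the other three obstacle positions and the goal area 16 are hypothetical.

```python
GRID_SIZE = 4  # assumption: 4 x 4 layout, areas numbered 1..16 row by row


def neighbors(area, size=GRID_SIZE):
    """Return the areas adjacent to `area` (8-connected, an assumption)."""
    row, col = divmod(area - 1, size)
    result = []
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if (dr, dc) == (0, 0):
                continue  # skip the area itself
            r, c = row + dr, col + dc
            if 0 <= r < size and 0 <= c < size:
                result.append(r * size + c + 1)
    return result


def build_rewards(obstacles, goal, size=GRID_SIZE):
    """Build rewards[current - 1][next - 1] following the scheme in the text."""
    n = size * size
    rewards = [[0] * n for _ in range(n)]  # non-neighbor transitions stay 0
    for area in range(1, n + 1):
        for nxt in neighbors(area, size):
            if nxt in obstacles:
                value = -10   # neighbor contains an obstacle
            elif nxt == goal:
                value = 900   # neighbor is the goal area
            else:
                value = 1     # free neighboring area
            rewards[area - 1][nxt - 1] = value
    return rewards


# Only area 6 is taken from the text; 8, 11 and 13 are hypothetical.
OBSTACLES = {6, 8, 11, 13}
R = build_rewards(OBSTACLES, goal=16)
```

Row 0 of `R` then reproduces the area 1 example: +1 toward areas 2 and 5, −10 toward area 6, and 0 elsewhere.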

Learning and Path Generation

The Q-table is initialized as a 16 × 16 zero matrix, with one row and one column per area, and is updated over multiple episodes. The user specifies the starting and target locations, and the robot learns an optimal path between them while avoiding obstacles. The resulting path is generated as a sequence of goals, which is explained in the next section.

In summary, environment locations are mapped to discrete area indices, and the Q-table is iteratively updated using the Bellman equation over a predefined number of episodes. Once training is complete, the path corresponding to the highest Q-values is selected as the optimal route from the start to the goal. The implementation can be reviewed in the `qlearning.py` file.
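The training and path extraction steps summarized above might look roughly like the following sketch. It is not a copy of `qlearning.py`. The 4 × 4 row-by-row layout, the 8-connected neighborhood, the obstacle set (only area 6 is from the text), the hyperparameters `alpha`, `gamma`, `episodes`, and the random exploration scheme are all assumptions made for illustration.

```python
import random

SIZE = 4  # assumption: 4 x 4 grid, areas numbered 1..16 row by row


def neighbors(area):
    """Areas adjacent to `area`, diagonals included (an assumption)."""
    row, col = divmod(area - 1, SIZE)
    cells = []
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            r, c = row + dr, col + dc
            if (dr or dc) and 0 <= r < SIZE and 0 <= c < SIZE:
                cells.append(r * SIZE + c + 1)
    return cells


def reward(nxt, goal, obstacles):
    """Reward for entering `nxt`, using the values given in the text."""
    if nxt in obstacles:
        return -10
    return 900 if nxt == goal else 1


def train(goal, obstacles, episodes=3000, alpha=0.9, gamma=0.75, seed=0):
    """Tabular Q-learning with purely random exploration."""
    rng = random.Random(seed)
    n = SIZE * SIZE
    q = [[0.0] * n for _ in range(n)]  # 16 x 16 zeros: q[area - 1][next - 1]
    starts = [a for a in range(1, n + 1) if a != goal and a not in obstacles]
    for _ in range(episodes):
        state = rng.choice(starts)
        for _step in range(50):  # cap on episode length
            nxt = rng.choice(neighbors(state))
            r = reward(nxt, goal, obstacles)
            # Goal and obstacle areas end the episode, so nothing is bootstrapped.
            terminal = nxt == goal or nxt in obstacles
            future = 0.0 if terminal else max(
                q[nxt - 1][m - 1] for m in neighbors(nxt))
            q[state - 1][nxt - 1] += alpha * (
                r + gamma * future - q[state - 1][nxt - 1])
            if terminal:
                break
            state = nxt
    return q


def greedy_path(q, start, goal, max_steps=16):
    """Follow the highest Q-value from each area until the goal is reached."""
    path = [start]
    while path[-1] != goal and len(path) <= max_steps:
        state = path[-1]
        path.append(max(neighbors(state), key=lambda m: q[state - 1][m - 1]))
    return path


OBSTACLES = {6, 8, 11, 13}  # only area 6 is from the text; the rest are hypothetical
q_table = train(goal=16, obstacles=OBSTACLES)
path = greedy_path(q_table, start=1, goal=16)
```

Treating goal and obstacle areas as terminal keeps the −10 penalty from being offset by high values further along, so the greedy walk stays on free areas. The resulting `path` is a list of area numbers from the start to the goal.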

Sending a Sequence of Goals to ROS

After computing the optimal path using Q-learning, the robot’s intermediate positions are sent as sequential goals to the ROS Navigation Stack. This allows the TurtleBot3 to navigate through the environment by following the learned path.
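One possible way to send such a sequence is sketched below. It assumes ROS 1 with `move_base` running and uses the `actionlib` simple action client. The cell size, grid origin, node name, and the mapping from area numbers to map coordinates are hypothetical and would need to match the actual Gazebo world and map.

```python
SIZE = 4             # assumption: 4 x 4 grid, areas numbered 1..16 row by row
CELL = 1.0           # hypothetical cell edge length in metres
ORIGIN = (0.0, 0.0)  # hypothetical map coordinates of the grid corner next to area 1


def area_to_xy(area, cell=CELL, origin=ORIGIN):
    """Map an area number to the (x, y) centre of its cell (hypothetical layout)."""
    row, col = divmod(area - 1, SIZE)
    return (origin[0] + (col + 0.5) * cell, origin[1] + (row + 0.5) * cell)


def send_path(path, frame_id="map"):
    """Send each area after the start as a move_base goal, one at a time."""
    # ROS imports are local so area_to_xy can be used without a ROS install.
    import rospy
    import actionlib
    from actionlib_msgs.msg import GoalStatus
    from move_base_msgs.msg import MoveBaseAction, MoveBaseGoal

    rospy.init_node("send_goal_sequence")  # hypothetical node name
    client = actionlib.SimpleActionClient("move_base", MoveBaseAction)
    client.wait_for_server()

    for area in path[1:]:  # the robot already stands in the first area
        x, y = area_to_xy(area)
        goal = MoveBaseGoal()
        goal.target_pose.header.frame_id = frame_id
        goal.target_pose.header.stamp = rospy.Time.now()
        goal.target_pose.pose.position.x = x
        goal.target_pose.pose.position.y = y
        goal.target_pose.pose.orientation.w = 1.0  # identity orientation
        client.send_goal(goal)
        client.wait_for_result()  # block until this goal finishes
        if client.get_state() != GoalStatus.SUCCEEDED:
            rospy.logwarn("Goal for area %d was not reached; stopping.", area)
            return False
    return True
```

In a sourced workspace with the simulation and navigation stack running, calling `send_path` with a list of area numbers from the Q-learning step would drive the robot through the areas in order. Waiting for each result before sending the next goal keeps the robot on the learned sequence instead of heading directly to the final area.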

GitHub Repository

To access the source code for this project, please follow this link: Click Here!